If the designer CLAIMED that he had designed such an amp, that is, 2000 watts and zero distortion, then it would be a failure.

You are right. I used a bad analogy. It was bad because the Federal Trade Commission regulates what companies can say about the power ratings of amps. Sadly, it has no such regulation over empty claims of zero jitter.

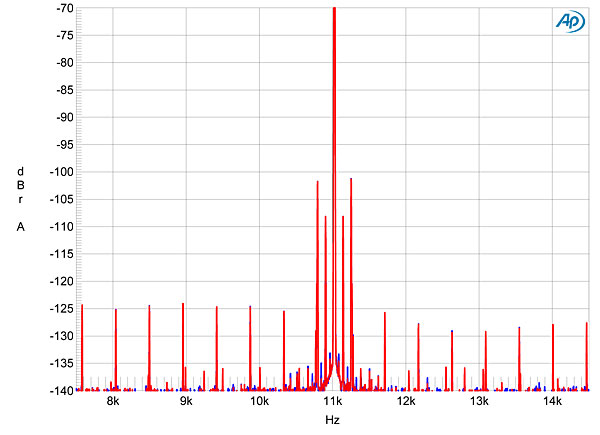

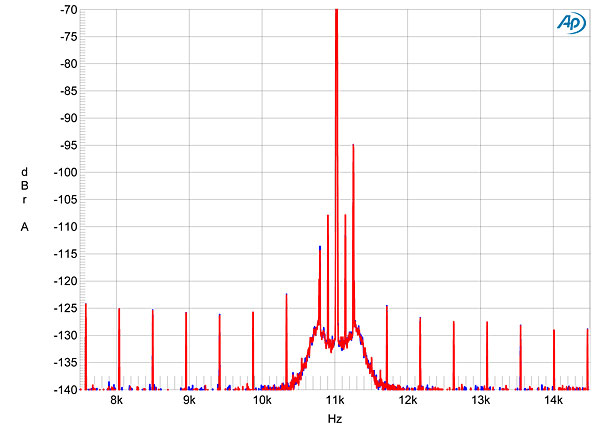

I have seen countless DACs claim to have no jitter, or ridiculously low jitter. Yet their makers never back that up with a measurement, nor do independent reviews show it to be the case.

But the process of creating a jitter-free clock from jittery incoming data is a straightforward design exercise, executable with standard design techniques, and in fact the purveyors of many DACs claim to be able to deal with high levels of jitter.

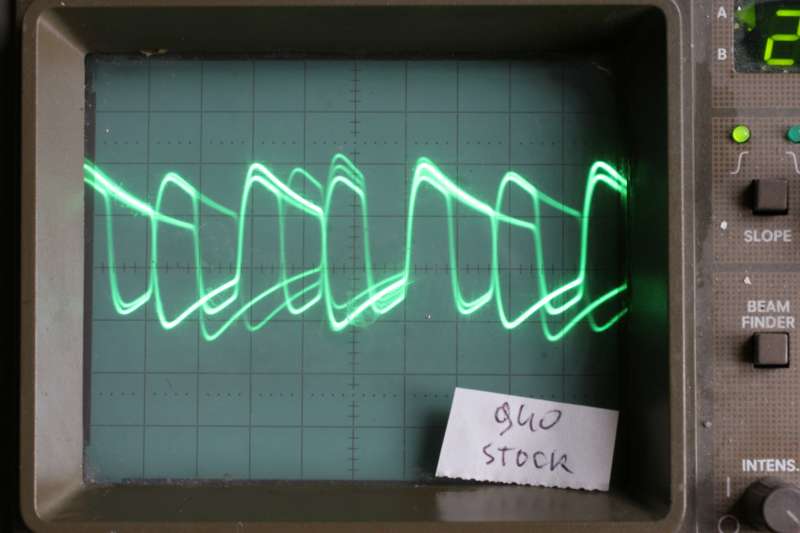

Straightforward? I consider PLL design to be the most challenging job in circuit design. It is part science, part black magic to get right. I have nearly had to fire engineers who, after six months and three spins of their designs, couldn't get the PLL right to capture bits properly from an SDI video connection over long cables. The tendency is to take the cookbook diagrams from the IC company and use them as-is, but that never performs. You need excellent analog design skills, including a grasp of esoteric effects of the PCB, power supply, coupling, etc.
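To make the disagreement concrete, here is the "textbook" part of the job in isolation: a minimal sketch of an idealized type-2 digital PLL (phase detector plus proportional-integral loop filter) tracking jittery input edges. The gains `kp`/`ki`, the 44.1 kHz edge rate, the 5 ns input jitter, and the 100 ppm starting offset are illustrative assumptions, not values from any real product. Note what it leaves out: oscillator phase noise, supply and ground coupling, PCB effects. Those omissions are exactly where, per the argument above, real designs succeed or fail.

```python
import math
import random


def _rms(xs):
    """RMS deviation of xs about its mean."""
    mean = sum(xs) / len(xs)
    return math.sqrt(sum((x - mean) ** 2 for x in xs) / len(xs))


def simulate_dpll(n_edges=20_000, period_ns=1e9 / 44_100, jitter_rms_ns=5.0,
                  kp=0.05, ki=0.05 ** 2 / 4, seed=1):
    """Track jittery input edges with an idealized type-2 digital PLL.

    Returns (input_jitter_rms_ns, output_jitter_rms_ns), measured after the
    loop has settled. Every noise source other than the input jitter
    (oscillator phase noise, supply ripple, coupling) is ignored.
    """
    rng = random.Random(seed)
    est_period = period_ns * (1 + 100e-6)  # local estimate starts 100 ppm off
    pred_edge = 0.0                        # where the loop expects the next edge
    settle = n_edges // 10                 # ignore the lock-in transient
    in_dev, out_dev = [], []
    for n in range(n_edges):
        ideal = n * period_ns
        edge = ideal + rng.gauss(0.0, jitter_rms_ns)  # jittery incoming edge
        err = edge - pred_edge                        # phase detector output
        if n >= settle:
            in_dev.append(edge - ideal)
            out_dev.append(pred_edge - ideal)
        est_period += ki * err              # integral path: tracks frequency
        pred_edge += est_period + kp * err  # proportional path: nudges phase
    return _rms(in_dev), _rms(out_dev)
```

Calling `simulate_dpll()` returns the input and output RMS jitter, and the output figure comes out several times smaller than the input. Shrinking `kp` (and `ki` with it) narrows the loop and filters more jitter, at the cost of slower lock and greater exposure to the local oscillator's own noise, which this model does not include.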

And again, there is no shortage of "claims." There is a shortage of proof.

To repeat, Naim have solved the problem very nicely by sidestepping it: the DAC watches the incoming data and selects, from a number of extremely stable internal clocks, the one that best matches the incoming signal. The jitter then comes down to how expensive a quartz clock the manufacturer wants to use ...

Frank
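The clock-bank idea described in the quote can be sketched as follows. The sample-rate families, the ±50/±100 ppm trims, and the buffer depth are hypothetical values chosen for illustration, not Naim's actual design. What the sketch makes visible is that unless one of the fixed clocks matches the source exactly, some residual rate mismatch remains, and a buffer can only absorb it for a finite time.

```python
import math

# Hypothetical clock bank, for illustration only: two sample-rate families,
# each with a few oscillators trimmed to small fixed offsets (in ppm).
NOMINAL_RATES_HZ = (44_100.0, 48_000.0)
OFFSETS_PPM = (-100.0, -50.0, 0.0, 50.0, 100.0)
BANK_HZ = [r * (1 + ppm * 1e-6) for r in NOMINAL_RATES_HZ for ppm in OFFSETS_PPM]


def pick_clock(incoming_hz, bank=BANK_HZ):
    """Return (chosen_clock_hz, residual_mismatch_ppm) for the nearest clock."""
    best = min(bank, key=lambda f: abs(f - incoming_hz))
    return best, (incoming_hz - best) / best * 1e6


def seconds_until_buffer_limit(incoming_hz, local_hz, half_depth_samples):
    """How long a buffer that starts half full lasts before it over/underflows."""
    drift = abs(incoming_hz - local_hz)  # samples gained or lost per second
    return math.inf if drift == 0 else half_depth_samples / drift
```

For a source running 37 ppm fast at 44.1 kHz, `pick_clock` selects the +50 ppm oscillator and leaves a residual of about -13 ppm; with 4,096 samples of headroom, `seconds_until_buffer_limit` gives roughly two hours before the buffer runs dry.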

Have they really? Here is the web page for that DAC:

http://www.naimaudio.com/hifi-product-type/583

Why is there not a single measurement proving that? If they have achieved zero jitter, then they should be shouting it from the mountaintop, with measurements to back it up. We are not talking about subjective things that can't be measured.

I spent an hour looking for measurements. Sadly, most of the reviews are subjective in nature and lack measurements. I did find one with some data:

http://www.naimaudio.com/userfiles/modules/product/reviews/pdf/dac_hifi-world_jan2010.pdf

"Distortion levels were low at higher music levels but rose above the expected value of 0.22% at -60dB, measuring a high 0.53% with a 16bit signal. With a 24bit signal this figure should drop to below 0.1% or lower, but with the DAC it remained a stubbornly high 0.38%, so the DAC isn’t especially linear in itself.

Output was a normal 2.3V and EIAJ dynamic range a modest 106dB due to the mediocre performance at -60dB. The Naim DAC should sound smooth enough. It isn’t a low distortion design however and is unimpressive here."

Well, guess what? Jitter raises the noise floor or shows up as distortion products. So while we don't know for sure what causes the high level of distortion, we certainly have some arrows pointing to less-than-perfect execution. If these things are so easy to do, then why the above results?
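For random clock jitter there is a standard first-order estimate of the SNR ceiling for a full-scale sine of frequency f sampled with RMS jitter σ: SNR = -20·log10(2πfσ). A minimal sketch, assuming Gaussian (white) jitter and a full-scale tone; it says nothing about correlated jitter, which shows up as discrete spurs instead:

```python
import math


def jitter_limited_snr_db(signal_hz, jitter_rms_s):
    """SNR ceiling (dB) for a full-scale sine sampled with random clock jitter
    of the given RMS value: SNR = -20 * log10(2 * pi * f * sigma)."""
    return -20.0 * math.log10(2.0 * math.pi * signal_hz * jitter_rms_s)


def bits_equivalent(snr_db):
    """Convert an SNR in dB to effective bits via SNR = 6.02 * N + 1.76."""
    return (snr_db - 1.76) / 6.02
```

With 1 ns RMS jitter, `jitter_limited_snr_db(10_000, 1e-9)` is about 84 dB, or roughly 13.7 effective bits, well short of the ~98 dB an ideal 16-bit channel offers.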

There are many ways jitter can get into a DAC. Even if you separate the clocks completely as they claim, jitter can still bleed in from the power rails, through EMI and RF, via circuit leakage, etc. This is why I said good PLLs are hard to design. Heck, even power supply ripple alone can create jitter, independent of anything else.
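To illustrate the supply-ripple point: if ripple modulates the sample clock's timing, the result is not a uniform rise in the noise floor but discrete sidebands at tone ± ripple frequency. The sketch below samples a 12 kHz tone with a clock whose edges are shifted by an assumed 1 ns peak at 120 Hz (a deliberately crude model of ripple coupling into the clock) and compares the measured sideband with the small-jitter prediction π·f·Δt. It is pure Python, so it takes a moment to run.

```python
import math


def bin_amplitude(x, k):
    """Amplitude of DFT bin k for a real signal x (exact for on-bin sines)."""
    n = len(x)
    re = sum(v * math.cos(2 * math.pi * k * i / n) for i, v in enumerate(x))
    im = sum(v * math.sin(2 * math.pi * k * i / n) for i, v in enumerate(x))
    return 2.0 * math.hypot(re, im) / n


def ripple_jitter_sideband(fs=48_000, tone_hz=12_000, ripple_hz=120,
                           jitter_peak_s=1e-9):
    """Sample a unit sine with a clock whose timing is modulated sinusoidally
    at ripple_hz (assumed model of ripple coupling into the clock).
    Returns (measured, predicted) sideband-to-carrier amplitude ratio."""
    n = fs  # one second of samples: 1 Hz bins, so every tone lands on a bin
    x = [math.sin(2 * math.pi * tone_hz *
                  (i / fs + jitter_peak_s * math.sin(2 * math.pi * ripple_hz * i / fs)))
         for i in range(n)]
    carrier = bin_amplitude(x, tone_hz)
    upper = bin_amplitude(x, tone_hz + ripple_hz)
    predicted = math.pi * tone_hz * jitter_peak_s  # small-jitter approximation
    return upper / carrier, predicted
```

The sideband comes out near 3.8e-5 of the tone, about -88 dB, from just 1 ns of timing wobble; it scales linearly with both the tone frequency and the jitter amplitude.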

Also note that if the Naim can't match the incoming rate to one of its internal clocks, it resorts to resampling the audio (interpolation). That will arguably degrade the audio more than jitter.
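On the resampling point, here is a sketch of what interpolation does to sample values. Linear interpolation is used only because it is the simplest resampler to show; real asynchronous sample-rate converters use long polyphase filters and do far better, so treat this as an exaggerated illustration, not a model of Naim's implementation. The standard error bound for linear interpolation of a unit sine is (2πf/fs)²/8, so the error grows roughly with the square of the tone frequency.

```python
import math


def resample_linear(x, fs_in, fs_out, n_out):
    """Resample x from fs_in to fs_out by linear interpolation (the crudest
    interpolator; chosen here for clarity, not quality)."""
    y = []
    for m in range(n_out):
        t = m * fs_in / fs_out  # output instant, in input-sample units
        i = int(t)
        frac = t - i
        y.append(x[i] * (1 - frac) + x[i + 1] * frac)
    return y


def max_interp_error(tone_hz, fs_in=44_100, fs_out=48_000, n_out=4_000):
    """Worst-case deviation from the ideal 48 kHz samples of a unit sine."""
    x = [math.sin(2 * math.pi * tone_hz * i / fs_in) for i in range(n_out + 2)]
    y = resample_linear(x, fs_in, fs_out, n_out)
    ideal = [math.sin(2 * math.pi * tone_hz * m / fs_out) for m in range(n_out)]
    return max(abs(a - b) for a, b in zip(y, ideal))
```

Comparing `max_interp_error(1_000)` with `max_interp_error(10_000)` shows the error at 10 kHz is far larger, which is why any serious resampler spends its effort on the interpolation filter.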

So no, nothing about this is simple. Unless you have a lot of gray hair and really, really understand high-performance PLL, analog and audio design, you are likely to get this more wrong than right.