Nice Review of DSD by Andreas Koch

- Thread starter MylesBAstor

Unfortunately, the article is not a review but a marketing paper for DSD. The existence of a chart or two and a few technical terms does not elevate it to a proper description of the serious issues with the format, which have been clearly documented and discussed: http://sjeng.org/ftp/SACD.pdf

The AES paper above is highly technical so people instead go by the simplistic tactics used in the marketing article mentioned here. Let me see if I can bring the concept down to a level that is easy to understand.

A digital system is by definition faulty as a representation of an analog system. It has a limit of resolution which analog in theory does not. Let's look at the extreme case of a 1-bit system. If I have an input music signal that goes from 0 to 1 volt and I represent it with just a 1 or 0, clearly I have a huge amount of distortion. Any signal below 0.5 volt would be a 0 and anything above a 1. If we convert this to analog, we just get a set of square-wave pulses that represent a huge amount of distortion.

As we increase the number of bits, the distortion goes down. A 2-bit system will represent four levels, so our output is now less of a square wave and more of a stepped waveform. It still has a huge amount of distortion, but clearly we have reduced it some by having more bits.
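To make the 1-bit versus multi-bit comparison concrete, here is a minimal sketch of a uniform quantizer applied to a signal that swings from 0 to 1 volt. The test tone (997 Hz at a 48 kHz sample rate) and the helper names are assumptions chosen for illustration, not anything taken from the AES paper.

```python
import numpy as np

def quantize(x, bits):
    """Uniform quantizer over the 0..1 V range described above."""
    levels = 2 ** bits
    # Map each sample to the nearest of `levels` evenly spaced values in [0, 1].
    codes = np.clip(np.round(x * (levels - 1)), 0, levels - 1)
    return codes / (levels - 1)

# Hypothetical test signal: a sine that swings between 0 and 1 volt.
t = np.arange(4800) / 48000.0
signal = 0.5 + 0.5 * np.sin(2 * np.pi * 997.0 * t)

def rms_error(bits):
    """RMS difference between the quantized and the original signal."""
    return float(np.sqrt(np.mean((quantize(signal, bits) - signal) ** 2)))

errors = {bits: rms_error(bits) for bits in (1, 2, 4, 8, 16)}
for bits, err in errors.items():
    print(f"{bits:2d} bits: RMS error = {err:.6f} V")
```

With 1 bit the output only ever takes the values 0 and 1, which is the square-wave case; each extra bit shrinks the error.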

The above is not yet related to DSD issues. I will get to that in a minute. But the point is that one has to understand that digital cannot be a perfect representation of an analog system if it cannot have a smooth response that is free of distortion such as described.

The solution to the above is called "dither." Dither is essentially noise. By adding this noise to our signal, we randomize the distortion product. Loosely speaking, anything combined with a random number becomes a random number. So signal-dependent distortion, in the face of dither, becomes plain noise. While we hear noise, it is not nearly as bothersome as distortion.

Now, if we had a ton of noise in our digital systems, that would not be good either. So what we need here is to have enough noise added as to eliminate distortion, but not too much that all we would hear is noise.
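A small simulation can show the trade described above. This is a sketch under assumed conditions: a tone of only 0.3 of one quantization step (LSB), and triangular (TPDF) dither made from two uniform noises, which is one common choice rather than the only one. Without dither the tone is erased completely; with dither it survives, buried in noise.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

n = 200_000
t = np.arange(n)
ref = np.sin(2 * np.pi * t / 100.0)
# Hypothetical low-level tone: 0.3 of one quantization step (LSB) in amplitude.
signal = 0.3 * ref

# Without dither every sample rounds to zero: the tone is erased entirely.
plain = np.round(signal)

# TPDF dither: the sum of two uniform noises, each spanning one LSB.
dither = rng.uniform(-0.5, 0.5, n) + rng.uniform(-0.5, 0.5, n)
dithered = np.round(signal + dither)

def tone_amplitude(x):
    """Estimate the amplitude of the test tone in x by correlation."""
    return float(2.0 * np.mean(x * ref))

amp_plain = tone_amplitude(plain)
amp_dithered = tone_amplitude(dithered)
noise_rms = float(np.std(dithered - signal))

print("undithered tone amplitude:", amp_plain)
print("dithered tone amplitude:  ", amp_dithered)
print("dithered error RMS (LSB): ", noise_rms)
```

The price of recovering the tone is the added noise, reported here as the RMS of the error in LSBs.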

A fancier scheme is called noise shaping. This says that instead of putting dither noise at all frequencies equally, we could put more of it at high frequencies (> 20 kHz) and hence less at audible frequencies. This is also talked about in the marketing paper by Andreas.
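As a rough illustration of noise shaping, the sketch below compares a plain dithered quantizer with a first-order error-feedback loop. First-order feedback is the simplest textbook form and is assumed here for clarity; real DSD modulators use much higher orders. The lowest sixteenth of the spectrum stands in for the audible band.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 1 << 14
# Hypothetical input, measured in units of one quantization step (LSB).
x = 100.0 * np.sin(2 * np.pi * 37 * np.arange(n) / n)
dither = rng.uniform(-0.5, 0.5, n) + rng.uniform(-0.5, 0.5, n)  # TPDF

# Plain dithered quantizer: the error is spread evenly across frequency.
plain = np.round(x + dither)

# First-order error feedback: subtract the previous error before quantizing.
shaped = np.empty(n)
prev_err = 0.0
for i in range(n):
    v = x[i] - prev_err
    shaped[i] = np.round(v + dither[i])
    prev_err = shaped[i] - v

def band_energy(err, lo, hi):
    """Summed error energy in FFT bins lo..hi-1."""
    spec = np.abs(np.fft.rfft(err)) ** 2
    return float(spec[lo:hi].sum())

low = (0, n // 32)             # lowest 1/16 of the band up to Nyquist
high = (n // 32, n // 2 + 1)   # everything above it

results = {}
for name, y in (("plain", plain), ("shaped", shaped)):
    err = y - x
    results[name] = (band_energy(err, *low), band_energy(err, *high))
    print(name, "low band:", results[name][0], "high band:", results[name][1])
```

The shaped version ends up with far less error energy in the low band and more in the high band: the noise has been moved, not removed.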

With me so far? If not, think of dither as salt and pepper you put on your food to taste good!

With multi-bit PCM, such as CD's 16 bits, we have bits we can "donate" to dither. Remember, dither is noise so it needs to add its value to the input signal somehow. Depending on the amount of noise we need to add, we could eat up 1, 2 or even 3 bits of the input signal that way.

This brings us to the issue at hand. DSD uses only 1 bit total. It can be shown mathematically (and done in the paper that I referenced) that in a 1-bit system, we cannot add the amount of dither we need to turn the distortion into noise. Doing so causes the system to saturate like an amplifier clipping, and create distortion. The solution then is to add less and doing so means that we have then let some of the distortions in our digitization remain. Therefore, such a system can never be shown to be distortion free.
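A back-of-the-envelope sketch of why one bit leaves no room for dither. It assumes the usual model in which proper TPDF dither spans 2 LSB peak to peak and a quantizer's no-overload range is its number of levels times the step size; the function name is made up for illustration.

```python
def signal_headroom(bits):
    """Fraction of the quantizer's no-overload range left for signal
    once 2-LSB peak-to-peak TPDF dither has been added (assumed model)."""
    levels = 2 ** bits
    no_overload_range_lsb = levels   # levels * step, measured in LSBs
    dither_span_lsb = 2              # TPDF dither, peak to peak
    return max(0.0, 1.0 - dither_span_lsb / no_overload_range_lsb)

headroom = {bits: signal_headroom(bits) for bits in (1, 2, 4, 16, 24)}
for bits, frac in headroom.items():
    print(f"{bits:2d} bits: {frac * 100:.4f}% of the range left for signal")
```

Under these assumptions the dither alone fills a 1-bit quantizer, while at 16 bits it costs a negligible fraction of the range.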

I will finish by quoting this bit from the AES paper:

"In recent years, we have seen the consumer audio industry perform a remarkable feat of salesmanship by proclaiming that 1-bit converters are better than multi-bit converters, and succeeding in marketing 1-bit products as preferable for the highest-quality performance. The original primary motivation for pursuing the 1-bit converter architecture was not superior performance, but rather the fact that it is cheaper to manufacture, consumes less power, and can operate well at the voltages used in battery-powered portable equipment. This has now become secondary, as 1-bit converters are currently used in consumer audio equipment at all price and quality levels. The manufacturers of high-quality converters struggled mightily to produce 1-bit devices that met the performance goals of the industry. But, they could never eliminate all the undesirable artefacts of such converters, and after more than a decade of trying, they came to the realization that they could produce better performance by using multi-bit converter architectures in their products. The one inherent advantage of the 1-bit architecture, namely its avoidance of the level-matching difficulties found in multi-bit converters, turned out not to be as significant a benefit as one might have thought. If one examines the current data-sheets of all the major high-quality converter manufacturers, one finds that they have almost universally given up on the 1-bit sigma-delta topology in favor of oversampling converters using more than two levels. Such converter architectures can avoid the intractabilities of both the 1-bit and the 20+-bit designs. They can be properly dithered, and can thus be guaranteed to be free of low-level, limit-cycle oscillations (“birdies”). Moreover, they do not suffer from the high-level instability problems of the higher-order, 1-bit sigma-delta converters."
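The limit-cycle behaviour mentioned in the quote can be seen in a toy model. The sketch below is an undithered, first-order, 1-bit sigma-delta loop fed a constant input; it is an assumed textbook structure, far simpler than the higher-order modulators the paper analyzes, and the function name is hypothetical.

```python
def first_order_sigma_delta(dc_input, n_samples):
    """Minimal 1-bit, first-order sigma-delta loop fed a constant input."""
    integrator = 0.0
    out = 1.0            # previous 1-bit output, arbitrarily started at +1
    bits = []
    for _ in range(n_samples):
        integrator += dc_input - out                  # accumulate the tracking error
        out = 1.0 if integrator >= 0.0 else -1.0      # 1-bit quantizer
        bits.append(int(out))
    return bits

stream = first_order_sigma_delta(0.5, 16)
print(stream)
print("average:", sum(stream) / len(stream))
```

The output settles into a fixed repeating pattern whose average equals the input. A repeating pattern is a tone, which is the kind of artefact dither is meant to break up.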

All of this said, I am cool with saying that subjectively, DSD sounds great or even superior to PCM. When SACD and DVD-A came out, that was my observation. I have no explanation other than maybe, like tubes, R2R and LP, we like these distortions. Or maybe it is the implementation that is at work here. Whatever the cause, it is improper to try to explain how technically DSD is superior to PCM where it can be shown readily that it is not. Indeed, the AES paper has the very same graph and simulations used in Andreas' marketing material here but goes on to show how that exact system is subject to distortion and oscillations.

Excellent post, Amir. I am reminded of the comparison we did at Music Lovers with Cookie Marenco playing the exact same violin track in RBCD and SACD formats and the SACD sounded *smoother*, sort of buttery, more *analog* as compared to the RBCD. Most in attendance preferred the sound of the SACD, but 3 violin players in attendance all agreed the RBCD replicated the sound of the violin more accurately precisely because of the lack of this seemingly added coloration. Qualifications: exact same gear (pre, amp, speakers, player), matched volume levels, multiple comparisons, but not double blind.

That's not an entirely valid comparison unless one is using a "pure" DSD file like Cookie (or Bruce).

Bruce B

WBF Founding Member, Pro Audio Production Member

Now how to get Puremusic USB out to the AT&T glass inputs of my 2 DCC2 SEs. Arggggghhhhhhh.

Actually it's easier than you think. I'm using Merging's Emotion/Mykerinos SDIF-3 -> Grimm OC1 -> Playback Designs or EMM Labs DAC via AT&T ST-optical

You'll just need the part in blue

Amir: Of course, every medium has its own particular/peculiar set of "distortions." It's just a question of which distortions are kinder to the ear.

Anyway, my SACDs are my best part of my music listening experience collection. :b

JVC XRCD24, right along that path (pack). :b

That's not an entirely valid comparison unless one is using a "pure" DSD file like Cookie (or Bruce).

True, to an extent. To the average consumer, however, and to almost all audiophiles who have engaged in these comparisons and proclaimed SACD king, including some of our current membership, it should give one pause for thought.

Bruce B

WBF Founding Member, Pro Audio Production Member

For DAC's you'll also need to include the Mytek, Digital Audio Denmark, Prism and the EMM Labs which were overlooked.

Bruce B

WBF Founding Member, Pro Audio Production Member

Bruce, have you done a conversion from PCM to DSD and found the latter to sound better?

Only on low level music. Something with snappy transients also works well too, like a drum solo.

Just confirming, you started with PCM digital files and conversion to DSD made them sound better than playing as PCM?

I'm sorry to disagree with the sentiments here, but theoretically distortion in digital is inaudible. Simple maths: it is no problem these days to get a digital system to measure distortion more than 100 dB below a full-level signal. Now, extremely few people run their systems where the peak sound level hits 100 dB at the listening position. That puts the distortion at 0 dB, inaudible. At lower sound levels the situation actually improves, since the sound level of the distortion is even lower.

So IF you hear distortion then the measurements are wrong, or something else is going on ...

Frank

Bruce B

WBF Founding Member, Pro Audio Production Member

Just confirming, you started with PCM digital files and conversion to DSD made them sound better than playing as PCM?

That is correct... only on those types of files.... IMHO...

DSD uses only 1 bit total. It can be shown mathematically (and done in the paper that I referenced) that in a 1-bit system, we cannot add the amount of dither we need to turn the distortion into noise. Doing so causes the system to saturate like an amplifier clipping, and create distortion. The solution then is to add less and doing so means that we have then let some of the distortions in our digitization remain. Therefore, such a system can never be shown to be distortion free.

I read your post with great interest. However, a couple of things jumped at me, and here's the first... The distortion in a PCM scheme can't be the same or of the same magnitude as DSD, can it? The above leads one to believe that *audible* digitization distortion remains in the DSD stream, but how true is that at the sampling rates it operates? Is there supporting data on this?

All of this said, I am cool with saying subjectively, DSD sounds great or even superior to PCM. When SACD and DVD-A came out, that was my observation. I have no explanation other than maybe like tubes, R2R and LP, we like these distortions. Or maybe it is the implementation that is at work here.

Could it also then be that the distortions are simply different, audible and annoying in one case but not in the other? For example, the difference between, say, odd-harmonics vs. even-harmonics? Or could it be that quantization distortion isn't at play here, but rather other things like pre-ringing in PCM brickwall filter implementations which may be absent from DSD? My point is that unless we can understand why DSD may have sounded superior to PCM, is it fair to say that "it is improper to try to explain how technically DSD is superior to PCM where it can be shown readily that it is not"???

And finally, when Bruce converted PCM to DSD and found the latter sounded better than the original, could it be that the lack of distortions like pre-ringing during the conversion to DSD and presumably upon final analog conversion caused the better-than-the-original effect?

If so, shouldn't we then be looking at PCM vs DSD and comparing them as complete solutions that include whatever filters, noise-shaping, distortion types et al may be necessary, and not just solely based on quantization distortion? The Koch article certainly appears to be taking such a broader view...

Actually it's easier than you think. I'm using Merging's Emotion/Mykerinos SDIF-3 -> Grimm OC1 -> Playback Designs or EMM Labs DAC via AT&T ST-optical

You'll just need the part in blue

Muchas Gracias Bruce, I'll look into it!

I read your post with great interest. However, a couple of things jumped at me, and here's the first... The distortion in a PCM scheme can't be the same or of the same magnitude as DSD, can it?

It is not. Multi-bit PCM quantization distortion can be completely eliminated because we have so much headroom. Indeed, 24-bit audio is "self-dithering" in that there is natural noise in the low-order bits, so even out of the box it is distortion free. If one wanted a lower noise floor still, the same noise shaping used in DSD can be utilized to push the noise to ultrasonics.
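The "self-dithering" remark can be checked with rough numbers. The sketch below assumes a converter whose analog noise floor sits at -120 dB relative to a full-scale sine; that figure is a hypothetical round number, not a value from this thread or from any data sheet.

```python
import math

def lsb_size(bits):
    """Quantization step for a signed PCM word spanning -1.0 to +1.0."""
    return 2.0 / (2 ** bits)

def noise_rms_in_lsb(noise_dbfs, bits):
    """RMS of an analog noise floor, expressed in LSBs of an N-bit word.
    noise_dbfs is relative to a full-scale sine (RMS = 1/sqrt(2))."""
    noise_rms = (10 ** (noise_dbfs / 20.0)) / math.sqrt(2.0)
    return noise_rms / lsb_size(bits)

ASSUMED_NOISE_DBFS = -120.0  # hypothetical converter noise floor (assumption)

ratio_16 = noise_rms_in_lsb(ASSUMED_NOISE_DBFS, 16)
ratio_24 = noise_rms_in_lsb(ASSUMED_NOISE_DBFS, 24)
print(f"16-bit: analog noise is {ratio_16:.3f} LSB RMS")
print(f"24-bit: analog noise is {ratio_24:.2f} LSB RMS")
```

Under that assumption the analog noise spans several LSBs of a 24-bit word, so it acts as dither on its own, while at 16 bits it is a small fraction of one LSB and dither has to be added deliberately.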

The above leads one to believe that *audible* digitization distortion remains in the DSD stream, but how true is that at the sampling rates it operates? Is there supporting data on this?

The AES paper has good simulations of this. It shows that when driven by one or two pure tones, there are harmonic distortions that are just 32 dB below the signal! Of course, the frequency by then is well into ultrasonics, so how audible those distortions are is subject to debate. More on this below. PCM, on the other hand, has no harmonic distortion, so it is completely superior in this regard. The bats get to enjoy PCM far more than they do DSD.

Well, per above, PCM does not have distortion. Due to the fact that we can dither multi-bit PCM, we have no distortion at all. So it is a curious case that a system with distortion would be preferred.Could it also then be that the distortions are simply different, audible and annoying in one case but not in the other?

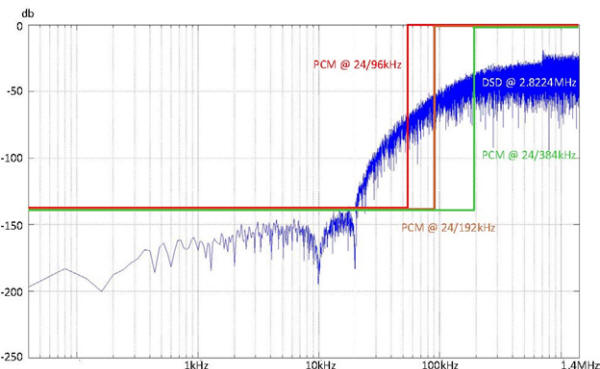

Andreas' article talks about that. But with high sampling rate PCM, we are free of that in the audible band. This is the point I wanted to make earlier. See this graph from the article:For example, the difference between, say, odd-harmonics vs. even-harmonics? Or could it be that quantization distortion isn't at play here, but rather other things like pre-ringing in PCM brickwall filter implementations which may be absent from DSD?

The noise above 20 kHz in DSD escalates at the rate of *140* dB per decade!
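To put that slope in perspective, here is a quick calculation. It treats the noise floor as an idealized straight line on a log-frequency axis, which a real modulator will only approximate, and it uses the rule of thumb that each order of noise shaping contributes roughly 20 dB per decade.

```python
import math

SLOPE_DB_PER_DECADE = 140.0   # figure quoted in the post above

def rise_db(f_low_hz, f_high_hz, slope=SLOPE_DB_PER_DECADE):
    """Noise rise between two frequencies on a constant dB-per-decade slope."""
    return slope * math.log10(f_high_hz / f_low_hz)

rise_20k_to_100k = rise_db(20_000, 100_000)
implied_order = SLOPE_DB_PER_DECADE / 20.0   # about 20 dB/decade per shaper order

print(f"20 kHz -> 100 kHz: {rise_20k_to_100k:.1f} dB")
print(f"implied noise-shaper order: {implied_order:.0f}")
```

On that idealized line the noise climbs by nearly 100 dB between 20 kHz and 100 kHz, and the slope corresponds to a seventh-order shaper.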

My point is that unless we can understand why DSD may have sounded superior to PCM, is it fair to say that "it is improper to try to explain how technically DSD is superior to PCM where it can be shown readily that it is not"???

This is the most important point. The AES article touches on it. It says that maybe the reason we like DSD is that the ultrasonic distortion in our speakers and amps causes them to sound different in the normal audio band.

It is also possible that somehow our ear responds differently when excited by ultrasonic content. This would explain why we don't hear it but why it might have an effect. In this scenario, it is entirely possible that what we want to hear is not the original content of music but the distorted version. Perhaps this is why analog sounds good, because it probably has a lot of junk going on there too. I am making this stuff up as I go, of course.

And finally, when Bruce converted PCM to DSD and found the latter sounded better than the original, could it be that the lack of distortions like pre-ringing during the conversion to DSD and presumably upon final analog conversion caused the better-than-the-original effect?

Again, high sampling rate PCM should not have an issue here. And at any rate, DSD is often also filtered at playback. If that is a digital filter, it will also ring.

If so, shouldn't we then be looking at PCM vs DSD and comparing them as complete solutions that include whatever filters, noise-shaping, distortion types et al may be necessary, and not just solely based on quantization distortion? The Koch article certainly appears to be taking such a broader view...

As I mentioned, the Koch article is marketing material. It makes statements like "it is proven we can hear 100 kHz" impulses, or some such thing. What proof? Proof needs to be presented with references and there is none here. And then he moves on to show the above graph. If we care up to 100 kHz, why would we want the system response to look like that? Did the test that proved 100 kHz was audible use such a distorted response?

Bottom line is that a DSD system is not linear, and it can have tones and a noise floor that is modulated by the input signal. Its foundation came from an approach to make DAC/ADC converters cheaper to make. And it came at a time when both Sony and Philips CD patents were expiring and they had to cook up something proprietary.

I was at the AES conference where a panel consisting of the authors in the AES paper (professors Lipshitz and Vanderkooy) and Sony/Philips were present. The content of the paper was shown using proper technical/mathematical analysis. Then everyone turned to the Sony/Philips folks and I was surprised that they provided no defense other than it sounds good anyway! Philips did present a counterpoint in the next conference but I have to say, despite preferring the sound of DSD, it was a let down. It was clear that smart signal processing people were not in the design loop of DSD.

If I surveyed 10 signal processing experts, I suspect every one of them would say DSD is flawed and has no advantage over PCM. I have yet to see anyone other than Sony/Philips folks praise it. There may be some out there but I have not seen them.

Hi

I have always found DSD claims of superiority to PCM suspect. I have also stated here on the WBF (and elsewhere) that I find the whole process flawed and wasteful. I have never found a clearer explanation than Amir's here. This to me settles the debate. I also read the paper by Koch and my conclusion is that it is pure marketing, one that doesn't hold up to even scant scrutiny.

Ron's mention of people preferring the sound, and of the professionals acknowledging that the PCM stream reproduced the violins better, is in line with my experience of several well-regarded recordings or systems whose reproduction of violins is that of an overly syrupy instrument. Violins are often not well reproduced in many systems... You are free to think what type of electronics or sources those systems are using...

While PCM is not perfect, far from it, it is IMO the best we have in digital right now. I am not sure where the limits are but they seem to be well above our hearing abilities.

Thanks Amir for an extraordinarily well-written post on the subject.
